We Audited Our Own Site. Here Is What We Found and Fixed.

The cobbler's children problem

We sell answer engine optimization (AEO). We build llms.txt files for clients. We implement schema markup on every site we deliver. And when we ran a full SEO audit on commerceking.ai, we found 27 issues on our own site.

We did not have an llms.txt file. Our Organization schema pointed to a logo file that did not exist. Our Spanish homepage was rendering English FAQ data in the structured markup. The www subdomain served a full duplicate of the site instead of redirecting.

Every agency has this problem. You spend all your time building for clients and your own site gets neglected. We decided to fix that publicly because transparency is part of how we operate.

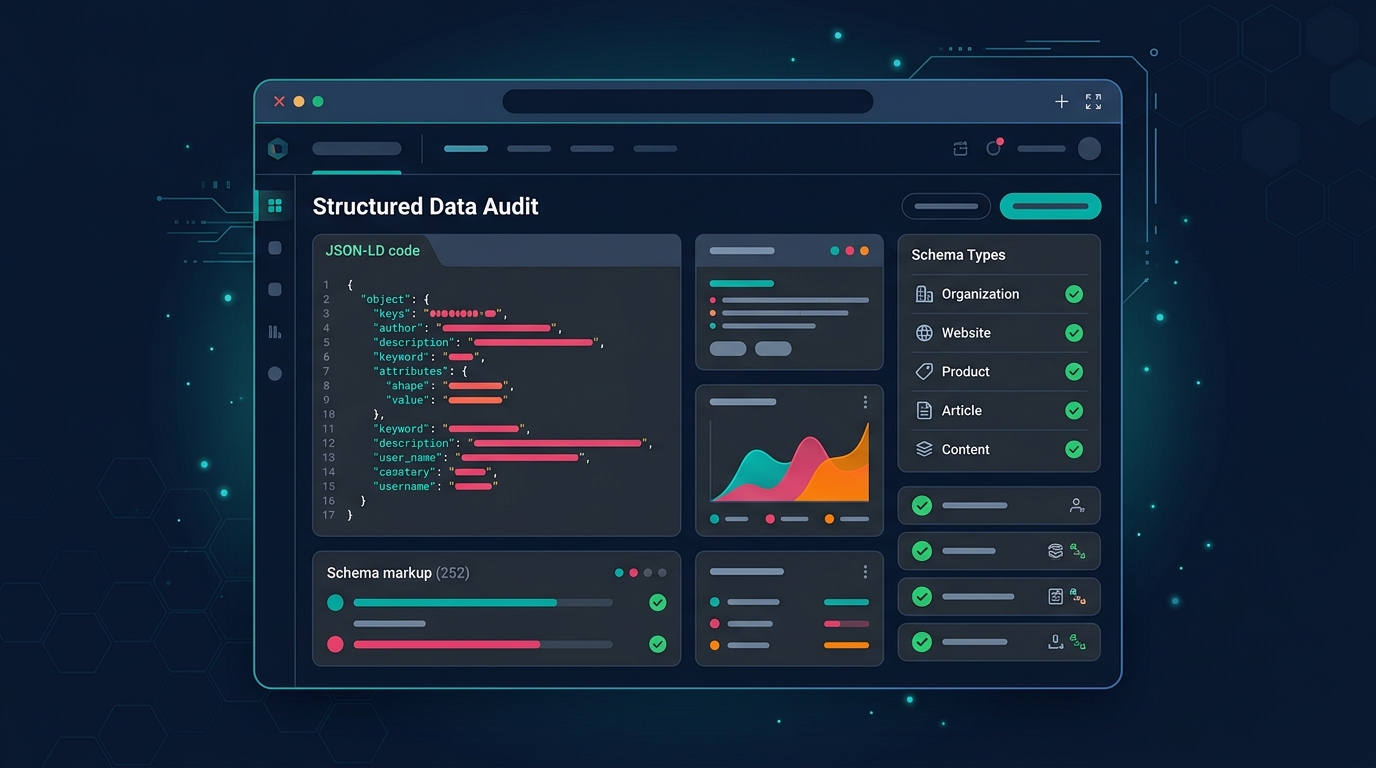

What the audit found

We ran seven parallel audits: technical SEO, content quality, schema markup, sitemap validation, performance, visual/mobile, and AI search readiness (GEO). The overall health score came back at 68 out of 100. Not terrible, but not where an AEO agency should be.

The critical issues

No llms.txt file. This is the file that gives AI systems (ChatGPT, Claude, Perplexity) a plain-text summary of who you are and what you do. We literally sell this as a service. Not having one on our own site was the most embarrassing finding in the audit.

The Open Graph image reference was broken. Every page pointed to social-card.png, but the actual file was named opengraph.jpg. Every social share showed a broken preview.
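A check like this is easy to automate. The sketch below is an illustration, not the exact script used in the audit: it pulls every og:image value out of a page's HTML and reports the ones whose file is not in a set of known assets. The names `OgImageParser`, `og_images`, and `missing_og_images` are hypothetical, and the set of available files is assumed to come from listing the build output.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


class OgImageParser(HTMLParser):
    """Collects the content of every <meta property="og:image"> tag."""

    def __init__(self):
        super().__init__()
        self.og_images = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if attributes.get("property") == "og:image" and attributes.get("content"):
            self.og_images.append(attributes["content"])


def og_images(html: str) -> list:
    """Return all og:image URLs found in the given HTML."""
    parser = OgImageParser()
    parser.feed(html)
    return parser.og_images


def missing_og_images(html: str, available_files: set) -> list:
    """Return og:image URLs whose path is not among the available files."""
    return [
        url
        for url in og_images(html)
        if urlsplit(url).path.lstrip("/") not in available_files
    ]
```

Run against a page that references social-card.png while only opengraph.jpg exists in the build, this returns the broken URL; an empty list means every reference resolves.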

The Spanish homepage had English FAQ content in its structured data. We have a bilingual site (English and Spanish) and the schema markup was pulling from the wrong data file.
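One way to guard against this kind of mix-up is to key FAQ data by locale and fail loudly when a locale is missing, rather than silently falling back to English. The sketch below shows the idea under stated assumptions: `FAQS_BY_LOCALE`, `faq_schema`, and the placeholder questions are hypothetical and do not reflect the site's real data files.

```python
# Hypothetical FAQ data keyed by locale; placeholder content only.
FAQS_BY_LOCALE = {
    "en": [("What is AEO?", "Placeholder answer in English.")],
    "es": [("¿Qué es AEO?", "Respuesta de ejemplo en español.")],
}


def faq_schema(locale: str) -> dict:
    """Build FAQPage JSON-LD for one locale.

    Raises KeyError for an unknown locale instead of falling back to English.
    """
    faqs = FAQS_BY_LOCALE[locale]
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "inLanguage": locale,
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
```

Calling `faq_schema("es")` yields markup whose questions and `inLanguage` value both come from the Spanish entry, so a missing translation breaks the build instead of shipping English content on a Spanish page.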

The technical issues

Zero security headers. No HSTS, no Content-Security-Policy, no X-Content-Type-Options. We added all six recommended headers through an Amplify customHttp.yml configuration.
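To keep headers from quietly disappearing again, a small check can compare a response's headers against a list of expected ones. This is a minimal sketch, not the audit tool itself. The first three header names come from the findings above; the other three (X-Frame-Options, Referrer-Policy, Permissions-Policy) are an assumption about what a typical "recommended six" contains, and `missing_security_headers` is a hypothetical helper name.

```python
RECOMMENDED_HEADERS = [
    "Strict-Transport-Security",  # HSTS, named in the audit
    "Content-Security-Policy",    # named in the audit
    "X-Content-Type-Options",     # named in the audit
    "X-Frame-Options",            # assumption: commonly recommended
    "Referrer-Policy",            # assumption: commonly recommended
    "Permissions-Policy",         # assumption: commonly recommended
]


def missing_security_headers(response_headers: dict) -> list:
    """Return the recommended headers absent from a response.

    Header names are compared case-insensitively, as HTTP requires.
    """
    present = {name.lower() for name in response_headers}
    return [name for name in RECOMMENDED_HEADERS if name.lower() not in present]
```

Passing in the headers from any HTTP client response gives a list of what is still missing; an empty list means all six are present.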

The www subdomain was serving the full site with HTTP 200 instead of redirecting to the canonical non-www domain. We fixed this with a single AWS CLI command to add a 301 redirect rule in Amplify.
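The redirect itself is simple to verify after the fact. The sketch below is an illustration under assumptions: `fetch_status_and_location` and `is_canonical_redirect` are hypothetical helper names, and the check only accepts a 301 whose Location points at the canonical host over HTTPS. It uses Python's standard `http.client`, which does not follow redirects, so the raw status code is visible.

```python
import http.client
from typing import Optional, Tuple
from urllib.parse import urlsplit


def fetch_status_and_location(host: str, path: str = "/") -> Tuple[int, Optional[str]]:
    """Send a HEAD request and return (status, Location header) without following redirects."""
    conn = http.client.HTTPSConnection(host, timeout=10)
    try:
        conn.request("HEAD", path)
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


def is_canonical_redirect(status: int, location: Optional[str], canonical_host: str) -> bool:
    """True only for a 301 whose target is the canonical host over HTTPS."""
    if status != 301 or not location:
        return False
    target = urlsplit(location)
    return target.scheme == "https" and target.hostname == canonical_host
```

For example, `is_canonical_redirect(*fetch_status_and_location("www.commerceking.ai"), "commerceking.ai")` should be true once the rule is in place, and false for the original behavior of serving the page with HTTP 200.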

Hreflang tags had trailing slash mismatches. The hreflang for the Spanish site pointed to /es (no slash) while the canonical URL was /es/ (with slash). Google treats these as different URLs.
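This class of mismatch is mechanical to detect. A minimal sketch, assuming the hreflang and canonical URLs have already been extracted from the page; `differs_only_by_trailing_slash` is a hypothetical helper name.

```python
def differs_only_by_trailing_slash(first_url: str, second_url: str) -> bool:
    """True when two URLs are not identical but match once trailing slashes are removed."""
    return first_url != second_url and first_url.rstrip("/") == second_url.rstrip("/")
```

Comparing `https://commerceking.ai/es` with `https://commerceking.ai/es/` returns true, which flags exactly the case described above; identical URLs and genuinely different paths both return false.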

Google Fonts was loaded as a render-blocking cross-origin request with no preconnect hint. We added preconnect for Google Fonts, gstatic, and HubSpot, then dropped an unused font weight to reduce download size.

The content issues

Two pages were critically thin. The services index had 120 words (a card grid with no context). The ecommerce service page had 250 words (a platform list with no explanation of how we choose platforms or run migrations). We expanded them to 500+ and 900+ words, respectively.

The hero stat said "10+ years in commerce" while the about page said "16+ years." We picked the accurate number and made it consistent.

What we built

llms.txt

We created a structured llms.txt file at commerceking.ai/llms.txt that describes who we are, what we do, our team credentials, the platforms we work with, and our brand ecosystem. Any AI system crawling our site now has a clean, structured summary to work with.
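For readers who have not seen one, the llms.txt proposal describes a markdown file with a title, a short blockquote summary, and sections of annotated links. The sketch below generates that shape from plain data. It is an illustration with placeholder content, not our actual file; `build_llms_txt` and every name, URL, and note passed to it are hypothetical.

```python
def build_llms_txt(name: str, summary: str, sections: dict) -> str:
    """Render an llms.txt document.

    `sections` maps a section heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # End the file with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content for illustration only.
example = build_llms_txt(
    "Example Agency",
    "Placeholder one-sentence summary of what the agency does.",
    {"Services": [("AEO", "https://example.com/services/aeo/", "Placeholder description")]},
)
```

Generating the file from the same data that drives the site's pages keeps the summary from drifting out of date as services change.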

AI crawler management

We updated robots.txt with explicit rules for AI crawlers. Search-oriented crawlers (GPTBot, ClaudeBot, PerplexityBot) are explicitly allowed. Training-only crawlers (CCBot, Google-Extended) are blocked. This gives us control over how AI models consume our content without blocking the systems that cite us in search results.
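The resulting rules follow a simple pattern: one block per user agent, with Allow or Disallow on the site root. The sketch below generates that pattern from two lists. The user-agent tokens and their grouping are taken from the paragraph above as written; the catch-all `User-agent: *` block and the `build_robots_txt` helper are assumptions added for illustration, not a copy of our live file.

```python
ALLOWED_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]
BLOCKED_AGENTS = ["CCBot", "Google-Extended"]


def build_robots_txt(allowed: list, blocked: list) -> str:
    """Render robots.txt with one explicit block per listed user agent."""
    blocks = [f"User-agent: {agent}\nAllow: /" for agent in allowed]
    blocks += [f"User-agent: {agent}\nDisallow: /" for agent in blocked]
    # Assumption: every crawler not listed above is allowed by default.
    blocks.append("User-agent: *\nAllow: /")
    return "\n\n".join(blocks) + "\n"


robots_txt = build_robots_txt(ALLOWED_AGENTS, BLOCKED_AGENTS)
```

Keeping the two lists in one place makes it a one-line change to move a crawler from allowed to blocked as vendors publish new user-agent tokens.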

Schema markup on every page

We added structured data to nine pages that had none. AboutPage schema with Person markup for all four team members. ContactPage schema. Service schema and BreadcrumbList on seven service pages. Blog posts now generate Article schema with author, date, and publisher data.
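As an example of what this markup looks like, here is a minimal sketch that builds BreadcrumbList JSON-LD and wraps it in the script tag search engines read. The schema.org types and properties are standard; the helper names `breadcrumb_list` and `to_script_tag` and the example.com URLs are hypothetical, and the real site generates its markup inside Astro templates rather than with this code.

```python
import json


def breadcrumb_list(crumbs: list) -> dict:
    """Build BreadcrumbList JSON-LD from an ordered list of (name, url) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(crumbs, start=1)
        ],
    }


def to_script_tag(data: dict) -> str:
    """Serialize JSON-LD into a script tag, escaping '</' so it cannot close the tag early."""
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


# Placeholder trail for illustration only.
trail = breadcrumb_list([
    ("Home", "https://example.com/"),
    ("Services", "https://example.com/services/"),
])
```

The `position` values start at 1 and follow the order of the trail, which is what the BreadcrumbList type expects.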

Bilingual form system

We replaced a "coming soon" placeholder on the contact page with a real HubSpot form. The English site gets the English form. The Spanish site gets a separate Spanish form. The submit button is branded in our CTA orange.

Performance improvements

Preconnect hints for three external domains. Dropped an unused font weight. Blog hero images now have fetchpriority="high" and explicit dimensions for CLS prevention. The site already scored well on Core Web Vitals (text-first Astro static site), but these changes tighten the margins.

The result

We fixed 27 issues across two days of focused work. The site now has an llms.txt file, explicit AI crawler rules, schema markup on every page, security headers, a proper www redirect, bilingual HubSpot forms, and expanded content on the two thinnest pages.

None of this required a redesign. None of it changed the visual appearance of the site. These are the invisible infrastructure improvements that determine whether AI systems can find you, understand you, and cite you in search results.

This is the same process we run for every client. The difference is we did it on our own site and wrote about it so you can see exactly what the work looks like.

What is still on the list

Case studies with real project examples. Client testimonials. Self-hosted fonts instead of Google Fonts. Page-specific Open Graph images instead of one shared default. These are on the roadmap. We will write about those too when they ship.